Trustable Online Community Elections

Posted: 2012/08/04 Filed under: pontification 2 CommentsOne of the hallmarks of successful online communities is self-governance, typically by having some or all admins/moderators/what-have-yous come from “within” the community. Many communities select such users through a formal election process. Wikipedia and Stack Exchange (naturally) are two large examples, you’ve probably stumbled across a number of forums with similar conventions.

But this is the internet, anonymity, sock-puppetry, and paranoia run wild.

How can we prove online elections are on the up-and-up?

First, a disclaimer. Today the solution is always some variation of reputation; sock puppetry is mitigated by the difficulty inherent in creating many “trusted” users, and the talliers are assumed to be acting in good faith (they, after all, are rarely anonymous and stand to lose a lot if caught). This works pretty well, the status quo is good; what I’m going to discuss is interesting (I hope), but hardly a pressingly needed change.

Also, this is just me thinking aloud; this doesn’t reflect any planned changes over at Stack Exchange.

Modeling the threat

We need to define what we’re actually worried about (so we can proof our solution against it), and what we explicitly don’t care about.

Legitimate concerns:

- Ballot box stuffing

- Vote tampering

- Vote miscounting

Explicitly ignoring:

- Voter impersonation

- Voter intimidation

The concerns are self explanatory, we just want to make sure only people who should vote do vote, that their votes are recorded correctly, and ultimately tallied correctly.

Attacks which we’re explicitly ignoring require a bit more explanation. They all ultimately descend from the fact that these elections are occurring online, where identity is weak and the stakes are relatively low.

Consider how difficult it is to prove Jimmy Wales is actually tweeting from that account, or the one actually editing a Wikipedia article. Since identity is so much weaker, we’re assuming whatever authentication a community is using for non-election tasks is adequate for elections.

We discount voter intimidation because, for practically all online communities, it’s impractical and unprofitable. If you were to choose two Stack Overflow users at random, it’s 50/50 whether they’re even on the same side of the Atlantic much less within “driving to their home with a lead pipe”-distance. Furthermore, the positions being run for aren’t of the high-paying variety. Essentially, the risk and difficulty grossly outweigh the rewards so I feel confident in discounting the threat.

The Scheme

Before we get too deep into the technical details, I need to credit VoteBox (Sandler, Wallach) for quite a lot of these ideas.

Voter Rolls

To protect against ballot stuffing, it’s important to have a codified list of who can participate in a given election. This is a really basic idea, you have to register to vote (directly or otherwise) in practically all jurisdictions. But in the world of software it’s awfully tempting to do a quick “User.CanVote()” check at time of ballot casting, which makes it impossible to detect ballot box stuffing without consulting a historical record of everything that affects eligibility (which itself is almost impossible to guarantee hasn’t been tampered with).

Therefore, prior to an election all eligible voters should be published (for verification). Once the election starts, this list must remain unchanged.

It’s A Challenge

Vote tampering (by the software typically) is difficult to proof against, but there are some good techniques. First, all ballots must actually be stored as ballots; you can’t just “Canididate++” in code somewhere, normalized values in a database are no good to anyone; you need the full history for audits. Again, this is common sense in real world elections but needs to be explicit for online ones.

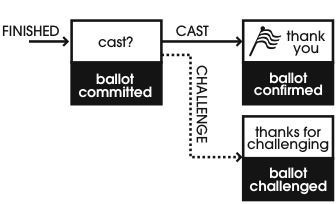

A more sophisticated approach is to make individual ballots “challenge-able”. The idea is simple: break up “casting a ballot” into two parts, “stage” and “commit/challenge”. When a ballot is staged, you publish it somewhere public (this implies some cryptography magic, which we’ll get back to later). If the voter then “commits” their vote you make a note of that (again publicly), but if they “challenge” it you reveal the contents of the ballot (this implicitly spoils the ballot, and some more crypto-magic) for verification. Obviously, if what the voter entered doesn’t match what the ballot contained something is amiss.

Challenge-able ballots make it possible for a group of voters to keep the system honest, as there’s no way for the system to know who will challenge a ballot any vote tampering carries a risk of being discovered. And with the internet being the internet news will get around quickly that something is up once tampered with ballots are being found, making the odds of challenging greater.

Freedom From Hash Chains

For challenges to work there needs to be a method for publishing “committed” ballots. We could use something low-tech like an RSS feed, mailing list, or similar but ideally we’d have some guarantee that the feed itself isn’t being tampered with. Consider the attack where some unchallenged ballots are removed from the feed long after they were published, or new ones are added as if they were cast in the past.

Our go-to solution for that is pushing each ballot (as well as some “keep alive” messages, for security reasons) into a modified hash chain. The idea is simple, every new message contains a hash of the message before it. Verifying no illicit insertions or deletions is just a matter of rehashing the chain one piece at a time and making sure everything matches. You can also take a single message and verifying that it still belongs in the chain at a later date, meaning that people who want to verify the chain don’t have to capture every message the instant it’s published.

Note that the VoteBox system goes a step further and creates a “hash mesh” wherein multiple voting machines hash each others messages, to detect tampering done from a single machine. In our model, all the machines are under the direct control of single entity and voters lack physical access to the hardware; the additional security of a “hash mesh” isn’t really present under this model accordingly.

Time Is Not On Your Side

One subtle attack on this hash chaining scheme: if you’re sufficiently motivated you can pre-compute them. While far from a sure thing, with sufficient analytic data to predict about when users will vote, how likely they are to vote, challenge, and so on you could run a large number of fake elections “offline” and then try and get away with publishing one that deviates the least (in terms of time and challenged ballots) from reality. With the same information and considerably more resources you could calculate fake elections on the fly starting from the currently accepted reality and try and steer things that way. Since the entities involved typically have lots of computing resources, and elastic computing being widely available, we have to mitigate this, admittedly unlikely, but not impossible attack.

The problem in both cases is time. An attacker has a lot of it before an election (when the voter roll may not be known, but can probably be guessed) and a fair amount of it during one.

We can completely eliminate the time before an election by using a random root message in the hash chain. Naturally we can’t trust the system to give us a randomly generated number, but if they commit to using something public and uncontrollable as the root message then all’s well. An example would be to use the front page headline of some national newspapers (say the New York Times, USA Today, and the Wall Street Journal; in that order) from the day the election starts as the root message. Since the root block is essentially impossible to know, faking a hash chain is no longer easier given the aforementioned analytic data.

To make attacking the hash chain during the election difficult we could introduce a random source for keep-alive payloads, like twitter messages from a Beiber search. More important would be selecting a hash function that is both memory and time hard, such as scrypt. Either is probably sufficient, but scrypt (or similar) would be preferable to Beiber if you had to choose.

Tallying Bananas

Thus far we’ve mostly concerned ourselves with individual ballots being tampered with, but what about the final results? With what’s been discussed thus far, it’s still entirely possible that everyone votes, challenges, and audits to their hearts’ content and at the end of the election totally bogus results are announced.

The problem is that the ballots that count are necessarily opaque (they have to be encrypted clearly, even if we are publishing them), only the challenged ones have been revealed.

There do exist encryption schemes that can work around this. Namely, any homomorphically additive encryption function; in other words, any encryption function E(x) for which there exist a function F(x1, x2) such that F(E(a), E(b)) = E(a+b). Two such schemes are (a modified) ElGamal and Paillier (others exists), the ephemeral keys present in ElGamal are useful for our ballot challenge scheme.

Encrypting ballots with ElGamal and publishing them allows anyone to tally the results of the election. The only constraint imposed by this system is that the voting system being used must be amenable to its ballots being represented by tuples of 1s and 0s; not a big hurdle, but something to be aware of.

Another subtle attack, since ballots are opaque nothing prevents a ballot from containing something other than 0 or 1 for a slot in a “race” (or all 1s, or any other invalid combination). Some more crypto-magic in the form of zero knowledge proofs (specifically non-interactive ones) attached to a ballot for every “race” it contains gives us confidence (technically not 100%, but we can get arbitrarily close to it) that every ballot is well-formed. The exact construction is complicated (and a bit beyond me, honestly), but the Adder system [Kiayias, Korman, Walluck] has provided a reference implementation for ElGamal (adapted into VoteBox, the Adder source seems to have disappeared).

There’s one last elephant in the room with this system. Although everyone can tally the votes, and everyone can verify each individual vote is well-formed, those running the election are still the only ones who can decrypt the final results (they’re the only ones who have the ElGamal private key). There honestly isn’t a fool-proof solution to this that I’m aware of, as revealing the private key allows for individual ballots to be decrypted in addition to the tally. Depending on community this may be acceptable (ie. ballot privacy may only matter during the election), but that is more than likely not the case. If there is a trusted third party, having them hold in the key in escrow for the duration of the election and then verify and decrypt the tally. In the absence of a single third-party, a threshold scheme for revealing the private key may be acceptable.

Altogether Now

In short, the proposed system works like so:

- Prior to the election starting, a full roll of eligible voters is published

- When the election starts, a hash chain is started with an agreed upon (but random) first message

- The hash chain uses a memory and time hard hash function

- A public/private key pair for a homomorphically additive encryption system is stored in an agreed upon manner

- Optionally, throughout the election agreed upon (but random) messages are added to the hash chain as keep-alives

When a user votes:

- Their selections are encrypted using the previously generated public key

- A NIZK is attached to the ballot to prove its well-formedness

- An identifying token is also attached to the ballot to check against the voter roll

- All this data is pushed to the hash chain as a single message

- The voter then chooses to either commit or challenge their ballot

- If they commit, a message is added to the hash chain to this effect

- If they challenge, the ephemeral key for the ballot is published; allowing any third-party to decrypt the ballot, letting the voter prove the election is being run in good faith (they may then cast another ballot)

When the election ends:

- Some pre-agreed upon message is placed in the hash chain

- It’s not important this message be random, it’s just a signal that those watching can stop as the chain is finished

- Anyone who wishes to can verify the integrity of the published hash chain, along with the integrity of individual ballots

- Anyone who wishes to may tally the votes by exploiting the homomorphic properties of the chosen encryption scheme

- Whichever approach to decrypting the tally has been chosen is undertaken, concluding the election

Improvements

The obvious place to focus on improving is the final decryption. My gut (with all the weight of a Bachelor’s in Computer Science, so not a lot of weight) tells me there should be a way to construct a proof that the announced tally actually comes from decrypting the summed ciphertexts. Or perhaps there’s some way to exploit the one-to-many nature of ElGamal encryption to provide (in advance of the election naturally) a function that will transform the tally ciphertext into one a different key can decrypt, but have that transformation not function on the individual ballots.

Of course, as I stated before, the status quo is acceptable. It’s just fun to think of what we could build to deal with the theoretical problems.